Monitoring Bot Traffic for Faster Performance, Higher Revenue & Better SEO

Yes, I know. Bots can be a real pain. They can skew your analytics. They can purchase those Foo Fighters tickets before you do (that’s a story for another day). And worse, they can inject viruses, launch DDoS attacks and make your site slower.

But some bots are good, like…

- Search Engine Bots: Ensure search results are updated and ranked accordingly.

- Commercial Crawlers: Collect information on things like products, pricing, advertising and keywords.

- Feed Fetchers: Retrieve data to be shown on feeds like Facebook and Twitter.

- Monitoring Bots: Monitor web performance from various locations and devices.

Graphic from Incapsula.

So with good bots and bad bots comprising 52% of total internet traffic in 2016, it’s important to monitor their activity on your site for three reasons:

- Large amounts of bot traffic can impact your end-user performance, especially in areas where the bots originate.

- Slow-downs from bot traffic can hurt your revenue.

- Search engines rely on bots to crawl your site, and the speed of their experience matters to your search rankings.

Monitoring Bot Traffic for Faster Performance and Higher Revenue

Bot traffic can create a high load on your site’s servers, slowing down server-side response times. This results in delays for your end users, especially in the geographic regions where an influx of bot traffic is present.

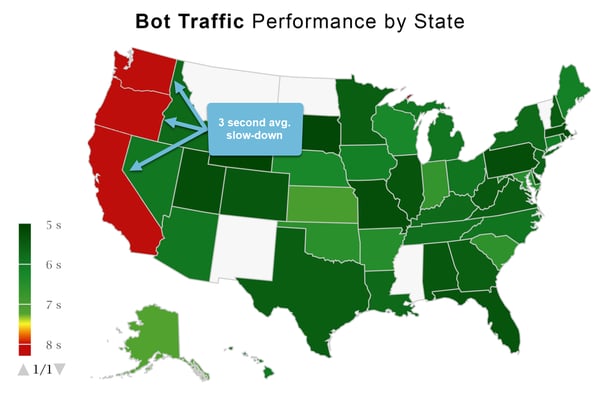

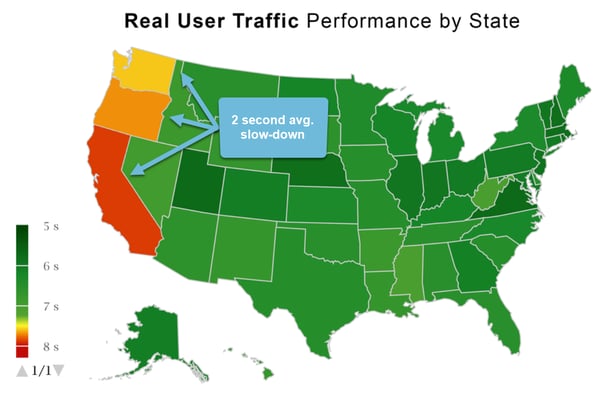

One of our retail customers had a large amount of bot traffic coming from Washington, Oregon and California.

The amount and frequency of bot traffic slowed down their back-end systems. This slowed performance by 3 seconds for bot traffic and by 2 seconds for real users on the West Coast.

Our Real User Monitoring technology calculated that the 2-second real-user slowdown cost the retailer $15.3K per day before the bots were stopped at the firewall.

This could be happening on your site right now. Avoid performance pains and achieve faster performance and higher revenue by doing these things:

- Have a strategy in place to block bot traffic. Many CDNs offer this service, as do companies like Incapsula and Distil Networks.

- Monitor performance and page views by geography in real time so you can quickly catch issues.

- Be sure you're collecting relevant traffic identifiers (e.g. IP addresses, user agent strings) so you can pinpoint the source of the bots.
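
To make the last point concrete, here is a minimal sketch that tallies bot and human requests from access-log lines and counts bot requests per IP address. It assumes your server writes the common Apache/Nginx "combined" log format. The `BOT_MARKERS` list and the function names are hypothetical, not part of any product, and user agent strings can be spoofed, so treat the output as a first pass rather than proof.

```python
import re
from collections import Counter

# Assumption: logs use the Apache/Nginx "combined" format.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]*\] "[^"]*" \d{3} \S+ "[^"]*" "(?P<agent>[^"]*)"'
)

# Hypothetical, incomplete list of substrings that self-identified bots use.
BOT_MARKERS = ("bot", "crawler", "spider", "slurp")


def is_bot(user_agent: str) -> bool:
    """Return True when the user agent self-identifies as a bot."""
    agent = user_agent.lower()
    return any(marker in agent for marker in BOT_MARKERS)


def summarize(lines):
    """Count bot vs. human requests and bot requests per IP address."""
    totals = Counter()
    bot_ips = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match is None:
            continue  # skip lines that do not fit the assumed format
        if is_bot(match["agent"]):
            totals["bot"] += 1
            bot_ips[match["ip"]] += 1
        else:
            totals["human"] += 1
    return totals, bot_ips
```

Calling `bot_ips.most_common(10)` on the result lists the busiest bot addresses, which is a reasonable starting point for a firewall or CDN rule.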

Monitoring Bot Traffic for Better SEO

In 2010, Google announced that site speed is considered a factor in search rankings.

In their patent for Using Resource Load Times in Ranking Search Results, Google states:

"A search result for a resource having a short load time relative to resources having longer load times can be promoted in a presentation order, and search results for the resources having longer load times can be demoted."

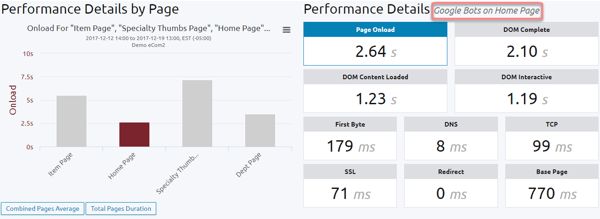

With Blue Triangle, you can monitor the experience of specific bots on your site.

The image below shows the path Google bots took and their experience on the home page:

Here are a few ways you can improve search engine bot experience and your SEO:

Image Optimization

Reducing images down to 200KB or less can dramatically decrease load time. You can even use tools like TinyPNG to remove unnecessary data from images. JPEG is your best bet, as all browsers support it.
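
If you want a quick way to find the worst offenders, the sketch below walks a folder and lists the images that exceed the 200KB target. It is only an illustration: the function name, the set of file extensions, and the choice to read 200KB as 200 × 1024 bytes are assumptions.

```python
from pathlib import Path

# Assumption: "200KB" is read as 200 * 1024 bytes.
SIZE_BUDGET_BYTES = 200 * 1024
# Assumed set of image formats to check; extend it for your site.
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".gif"}


def oversized_images(root, budget=SIZE_BUDGET_BYTES):
    """Return (path, size_in_bytes) for images above the budget, largest first."""
    found = []
    for path in Path(root).rglob("*"):
        if path.is_file() and path.suffix.lower() in IMAGE_SUFFIXES:
            size = path.stat().st_size
            if size > budget:
                found.append((path, size))
    return sorted(found, key=lambda item: item[1], reverse=True)
```

Point it at the directory that holds your site's static assets, for example `oversized_images("static/")`, and compress whatever it returns first.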

Reduce the Number of Files Loaded

Reducing HTTP requests will speed up your site. You can use CSS sprites, which combine multiple images into one file to improve site performance.

Reduce the Number of Plugins

Chances are, you have plugins that aren’t necessary. Delete these and not only will your site be more secure, it will load faster.

Key Takeaways

- See where your bot traffic is coming from and measure its impact on real-user performance. That way you know whether or not it's actually a problem.

- It’s all about revenue. Bot traffic can slow down your site and impact your revenue. Blue Triangle can measure that impact.

- To optimize your SEO, focus on the web pages where search engine bot traffic is experiencing slow load times.
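
For the last takeaway, one rough way to find those pages yourself is to average the load times that search engine bots experienced on each URL and sort the result. The sketch below assumes you can export records as `(url_path, user_agent, load_seconds)` tuples from whatever monitoring or logging you use. That record shape, the `SEARCH_BOT_MARKERS` list, and the function name are all hypothetical.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical, incomplete list of search engine crawler markers.
SEARCH_BOT_MARKERS = ("googlebot", "bingbot")


def slowest_pages_for_search_bots(records, top=5):
    """Rank pages by the mean load time seen by search engine bots.

    `records` is an iterable of (url_path, user_agent, load_seconds) tuples.
    """
    timings = defaultdict(list)
    for path, agent, seconds in records:
        if any(marker in agent.lower() for marker in SEARCH_BOT_MARKERS):
            timings[path].append(seconds)
    averages = [(path, mean(values)) for path, values in timings.items()]
    return sorted(averages, key=lambda item: item[1], reverse=True)[:top]
```

The pages at the top of that list are the ones to optimize first.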

Bot traffic could be impacting your revenue right now. Contact us. We're happy to help.
